Spatial Computing made our list of Top Tech Trends to watch in 2020, and it's clear Microsoft is leading the way. In this article, we discuss the powerful new spatial understanding capabilities of Microsoft's mixed reality device, HoloLens 2.

The HoloLens 2 made its debut in early 2019 at the Mobile World Congress (MWC) Barcelona and is the most sophisticated Spatial Computing device on the market. Spatial Computing is one of our Top 5 Trends for 2020, and we believe it will be the next wave of disruptive technology, gaining its foothold in the 2020s. HoloLens 2 not only leads the way as a groundbreaking Mixed Reality tool but is also a powerful intelligent edge device, packed with sensors and computing power, that can run advanced computer vision algorithms with AI in real time.

What sets the HoloLens 2 apart is that it can not only track itself in the physical world to make sure virtual objects stay in place, but also sense the environment via Spatial Mapping. Spatial Mapping is vital for creating believable immersive experiences in which virtual objects become part of the physical world, because the spatial map lets physical objects occlude virtual ones.

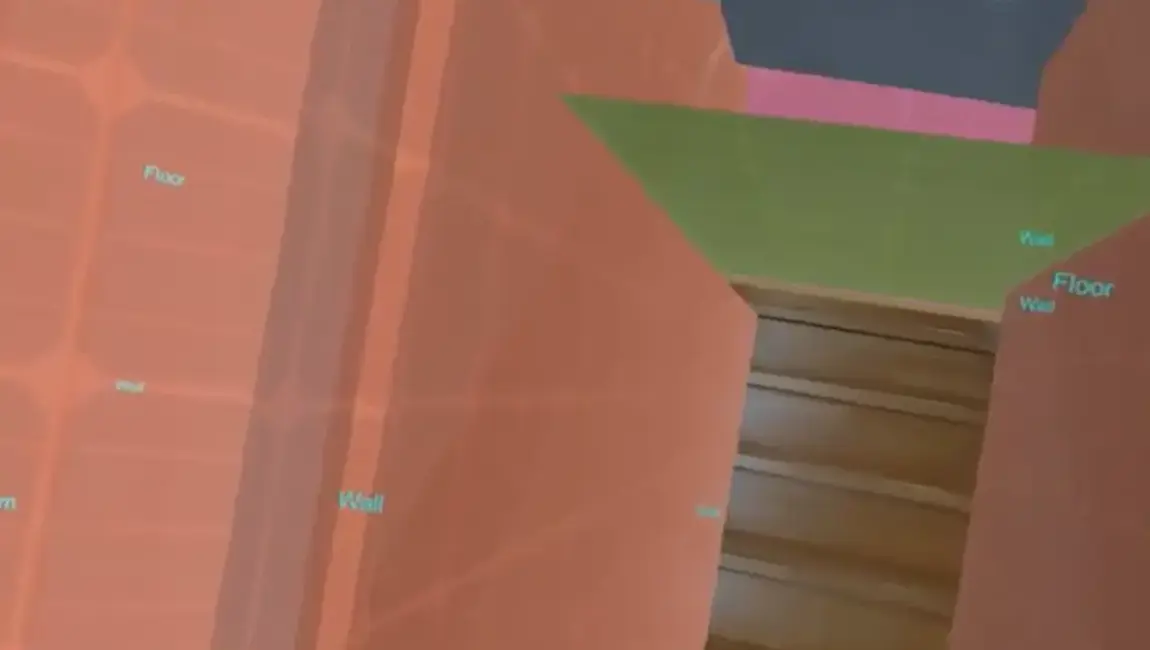

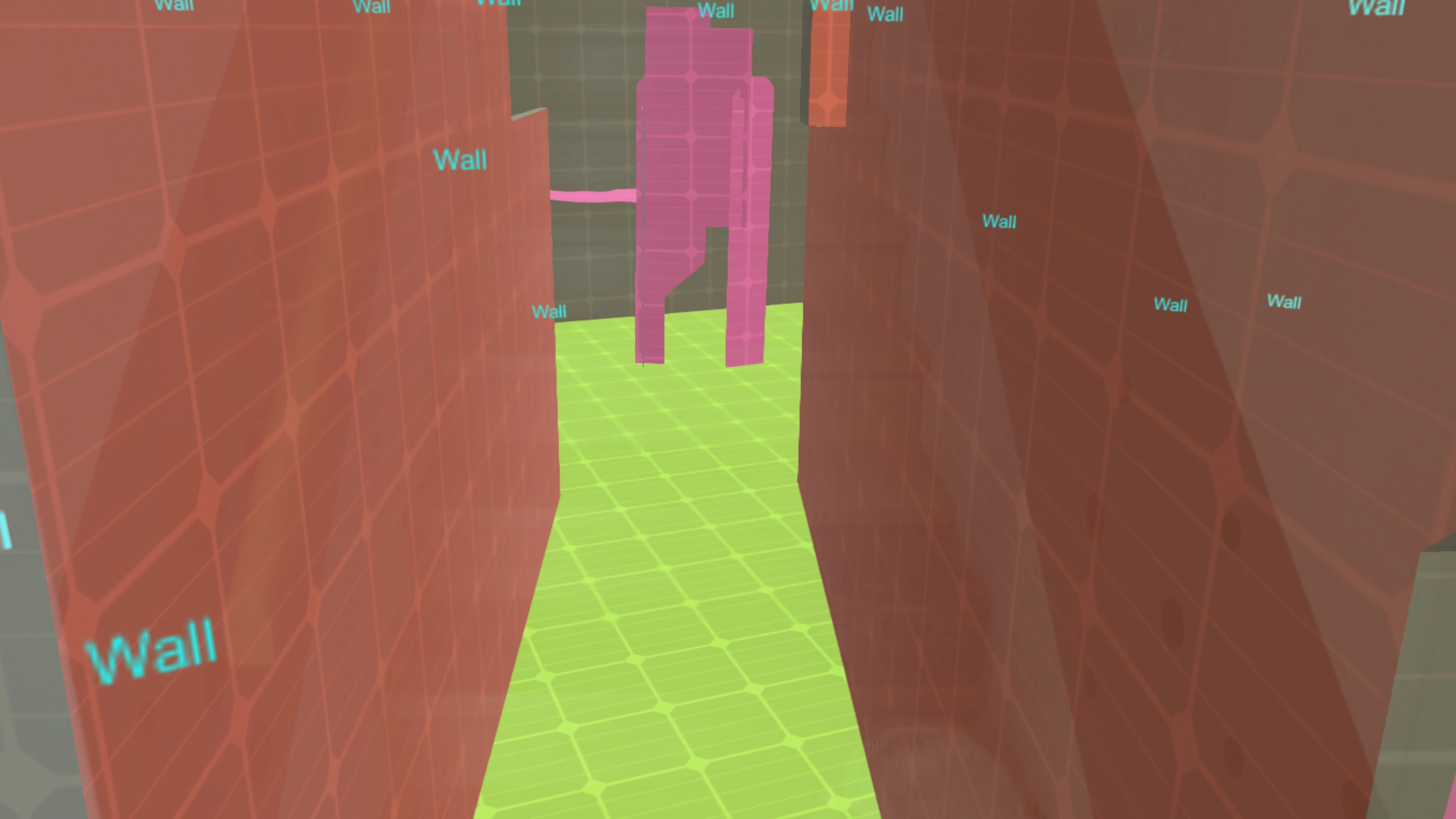

Scene Understanding in action with labeled real-world physical walls

Although the Spatial Mapping runtime provides information about the environment, such as where objects are, it does not say what those objects are. This is where the new Scene Understanding capabilities of the HoloLens 2 come into play: they process the spatial mapping data further, applying semantic segmentation to make sense of the spatial map. The Scene Understanding runtime takes the spatial map and runs it through advanced AI models that segment and classify its objects and fill in gaps to create watertight meshes. This structured data can then be used for better real-world occlusion, physics interactions, navigation, or visualization of larger areas beyond the spatial mapping cache.
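To make the idea of "structured data" a little more concrete, here is a minimal Python sketch of how an application might consume a classified scene. It is purely illustrative: the `Kind` labels, the `SceneObject` type, and the helper functions are our own hypothetical names and are not the Scene Understanding SDK's API. The sketch assumes each classified object is a flat rectangular surface with a label and a size.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    # Hypothetical labels for illustration; not the SDK's actual enum.
    WALL = "wall"
    FLOOR = "floor"
    CEILING = "ceiling"
    PLATFORM = "platform"


@dataclass(frozen=True)
class SceneObject:
    # Hypothetical stand-in for one classified surface in the scene.
    kind: Kind
    width_m: float   # extent of the planar surface, in meters
    height_m: float

    @property
    def area_m2(self) -> float:
        return self.width_m * self.height_m


def objects_of_kind(scene, kind):
    """Return every object in the scene that carries the given label."""
    return [obj for obj in scene if obj.kind is kind]


def largest_surface(scene, kind):
    """Return the largest surface with the given label, or None if absent."""
    return max(objects_of_kind(scene, kind), key=lambda o: o.area_m2, default=None)


# A made-up room: two walls, one floor, one table-like platform.
scene = [
    SceneObject(Kind.WALL, 4.0, 2.5),
    SceneObject(Kind.WALL, 3.0, 2.5),
    SceneObject(Kind.FLOOR, 4.0, 3.0),
    SceneObject(Kind.PLATFORM, 1.2, 0.6),
]

walls = objects_of_kind(scene, Kind.WALL)      # e.g. surfaces to occlude against
floor = largest_surface(scene, Kind.FLOOR)     # e.g. where to place or navigate content
print(len(walls), floor.area_m2)
```

With labels attached, an app can treat walls as occluders, use the floor for navigation, or place holograms on platforms, instead of working with one undifferentiated mesh.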

The Scene Understanding SDK allows developers to leverage the Scene Understanding runtime and integrate these advanced semantic understanding capabilities into their applications. The HoloLens 1 had the option of using Spatial Understanding and plane-finding libraries to get similar contextual information. However, the new HoloLens 2 Scene Understanding leverages the Mixed Reality runtime and can therefore run more efficiently, directly on the device, and provide more and better data.

Sound complicated? Watch the short video below, where I walk through the Scene Understanding SDK sample, show various ways to represent the data, and even view a mini map of the whole scene.

Have a Spatial Computing project in mind? We would love to hear about it! Valorem Reply can help you reach your innovation goals quickly and efficiently. Reach out to us at marketing@valorem.com to schedule time with one of our industry experts.